note

This article was last updated on July 17, 2023, 2 years ago. The content may be out of date.

The previous post showed how to extract YouTube video assets using yt-dlp and how to implement a dynamic reverse proxy. This post looks at the streaming format YouTube uses.

First, we'll find out which formats YouTube uses to stream high-quality videos in a browser.

Naive Solution

HTML has an audio element, so we could try to synchronize an audio element with a video element.

However, that is easier said than done: we have to handle not only the play, pause, and seek states, but also video and audio buffering, and it is very easy for the two elements to fall out of sync.

note

There was a non-standard mediaGroup property that was supposed to control such related elements together, but it was not widely supported and was eventually dropped.

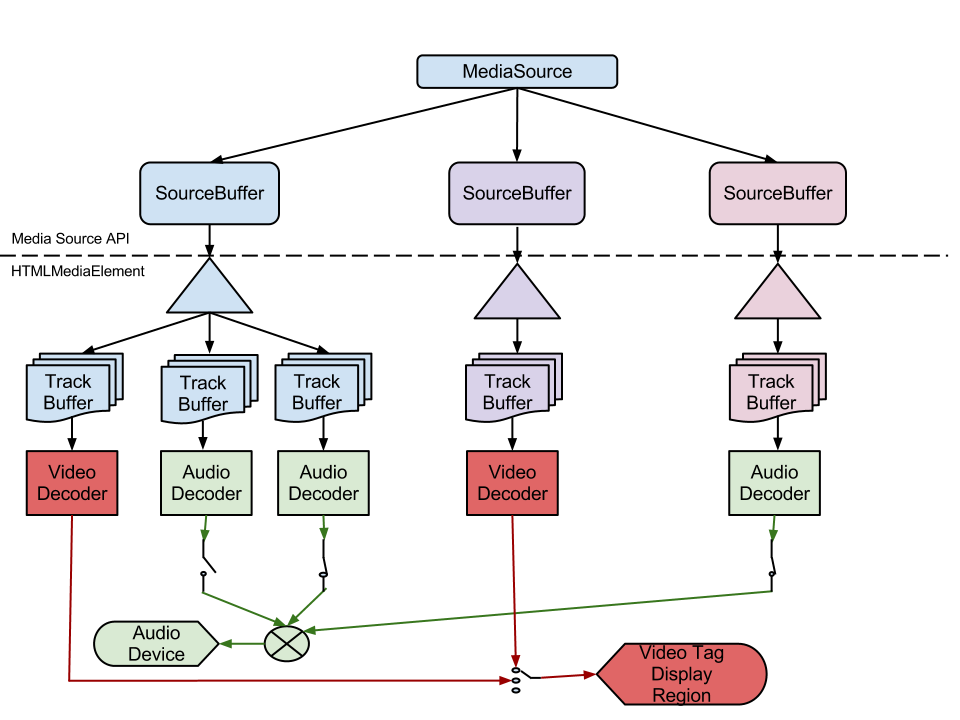

Media Source Extensions

Media Source Extensions (MSE) is an advanced HTML5 feature that lets us implement our own media streaming. As long as the browser supports the codecs used by the media, we can feed video and audio as bytes to the media source, and the browser will handle playback for us.

Naive Usage

This post demonstrates a basic example using MSE. Although it only shows how to play one video, we can adapt it to feed audio to the media source as well.

var vidElement = document.querySelector('video');

if (window.MediaSource) {
  var mediaSource = new MediaSource();
  vidElement.src = URL.createObjectURL(mediaSource);
  mediaSource.addEventListener('sourceopen', sourceOpen);
} else {
  console.log('The Media Source Extensions API is not supported.');
}

// Fetch a whole file and append it to a source buffer.
// The returned promise resolves once the append has finished.
function fetchAndAppend(url, sourceBuffer) {
  return fetch(url)
    .then(function (response) {
      return response.arrayBuffer();
    })
    .then(function (arrayBuffer) {
      return new Promise(function (resolve) {
        sourceBuffer.addEventListener('updateend', resolve, { once: true });
        sourceBuffer.appendBuffer(arrayBuffer);
      });
    });
}

function sourceOpen(e) {
  URL.revokeObjectURL(vidElement.src);
  var mediaSource = e.target;

  // One source buffer per track. The URLs are placeholders: each one
  // must point to a file that contains only the matching track.
  var videoMime = 'video/webm; codecs="vp9"';
  var videoBuffer = mediaSource.addSourceBuffer(videoMime);
  var videoUrl = 'droid.webm';

  var audioMime = 'audio/webm; codecs="opus"';
  var audioBuffer = mediaSource.addSourceBuffer(audioMime);
  var audioUrl = 'droid.webm';

  // Append video, then audio, and end the stream only after both are done.
  fetchAndAppend(videoUrl, videoBuffer)
    .then(function () {
      return fetchAndAppend(audioUrl, audioBuffer);
    })
    .then(function () {
      if (mediaSource.readyState === 'open') {
        mediaSource.endOfStream();
      }
    });
}

However, this example makes the browser download all the data before it starts playing the video, which is not what we want. We want the video to play while it is still being buffered.

To achieve that, we apparently have to parse the video and audio to find the keyframes, fetch only selected parts, and feed those parts to MSE.

Getting More Information about Media Assets

yt-dlp has a --write-pages option that writes intermediary pages to disk to help debug problems. For YouTube, these pages include JSON with information about the videos. In the downloaded JSON files, we can find something like this:

{
  "initRange": {
    "start": "0",
    "end": "219"
  },
  "indexRange": {
    "start": "220",
    "end": "4361"
  }
}

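It's probably related to video keyframes. And indeed, the Innertube API shows exactly how this info is used: both ranges are byte offsets into the media file, where initRange covers the initialization segment and indexRange covers the segment index that maps playback times to byte offsets.

The --write-pages flag corresponds to the write_pages parameter.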
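To make the role of these ranges concrete, here is a minimal Go sketch of how a client could fetch just the initialization segment and the index with HTTP Range requests. The names byteRange and fetchRange are made up for this illustration, and a local test server stands in for a real asset URL, so treat it as a sketch under those assumptions rather than what any particular player does.

```go
package main

import (
	"bytes"
	"fmt"
	"io"
	"net/http"
	"net/http/httptest"
	"time"
)

// byteRange mirrors the initRange/indexRange objects in the dumped JSON,
// where both offsets are encoded as strings. (Hypothetical helper type.)
type byteRange struct {
	Start string `json:"start"`
	End   string `json:"end"`
}

// header returns the HTTP Range header value. Both ends are inclusive.
func (r byteRange) header() string {
	return "bytes=" + r.Start + "-" + r.End
}

// fetchRange downloads only the requested bytes of the asset at url.
func fetchRange(url string, r byteRange) ([]byte, error) {
	req, err := http.NewRequest(http.MethodGet, url, nil)
	if err != nil {
		return nil, err
	}
	req.Header.Set("Range", r.header())
	resp, err := http.DefaultClient.Do(req)
	if err != nil {
		return nil, err
	}
	defer resp.Body.Close()
	if resp.StatusCode != http.StatusPartialContent {
		return nil, fmt.Errorf("expected 206 Partial Content, got %s", resp.Status)
	}
	return io.ReadAll(resp.Body)
}

func main() {
	// Stand-in for a media asset: 5000 bytes served by a local test server.
	asset := bytes.Repeat([]byte{0xAB}, 5000)
	srv := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, req *http.Request) {
		http.ServeContent(w, req, "asset.webm", time.Time{}, bytes.NewReader(asset))
	}))
	defer srv.Close()

	initSeg, err := fetchRange(srv.URL, byteRange{Start: "0", End: "219"})
	if err != nil {
		panic(err)
	}
	index, err := fetchRange(srv.URL, byteRange{Start: "220", End: "4361"})
	if err != nil {
		panic(err)
	}
	fmt.Println(len(initSeg), len(index)) // prints "220 4142"
}
```

Because both ends of a range are inclusive, the range 0 to 219 yields 220 bytes. A DASH player does the same kind of requests internally once the ranges are listed in a manifest.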

Extracting Info and Generating MPD Manifests

We can call the yt-dlp service defined in the previous post like this:

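import (
	"encoding/json"
	"net"
)

// ydlRequest is the request sent to the yt-dlp service.
type ydlRequest struct {
	Url        string `json:"url"`
	WritePages bool   `json:"write_pages"`
}

// ydlJson holds the fields we need from the yt-dlp response.
type ydlJson struct {
	Duration         int `json:"duration"`
	RequestedFormats []struct {
		FormatID string `json:"format_id"`
		Url      string `json:"url"`
	} `json:"requested_formats"`
}

// callYdl asks the yt-dlp service for the asset URLs of a video.
func callYdl(id string) (ydlJson, error) {
	var yj ydlJson
	conn, err := net.Dial("unix", "/path/to/yt-dlp.socket")
	if err != nil {
		return yj, err
	}
	defer conn.Close()

	r := ydlRequest{
		Url:        "https://www.youtube.com/watch?v=" + id,
		WritePages: true,
	}
	if err := json.NewEncoder(conn).Encode(r); err != nil {
		return yj, err
	}
	// yj contains information about the asset URLs.
	err = json.NewDecoder(conn).Decode(&yj)
	return yj, err
}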

We need format_id to look up the remaining media information in the dumped JSON.

The filename of the dumped JSON follows this pattern:

${video id}_https_-_www.youtube.com_youtubei_v1_playerkey*

We can use filepath.Glob to find the dumped JSON file and extract the relevant information, which can be defined as:

type dumpJson struct {
	StreamingData struct {
		AdaptiveFormats []struct {
			Itag            int    `json:"itag"`
			MimeType        string `json:"mimeType"`
			Bitrate         int    `json:"bitrate"`
			Width           int    `json:"width"`
			Height          int    `json:"height"`
			Fps             int    `json:"fps"`
			AudioSampleRate string `json:"audioSampleRate"`
			AudioChannels   int    `json:"audioChannels"`
			InitRange       struct {
				Start string `json:"start"`
				End   string `json:"end"`
			} `json:"initRange"`
			IndexRange struct {
				Start string `json:"start"`
				End   string `json:"end"`
			} `json:"indexRange"`
		} `json:"adaptiveFormats"`
	} `json:"streamingData"`
}

The itag of each entry is the same as the format_id in the output of yt-dlp.

We can generate MPD manifests using the info we extracted before. The implementation is left as an exercise.

Playing MPD Manifests

Two popular DASH libraries for browsers are shaka-player and dash.js. Both are very easy to use.

Among standalone video players, mpv and its derivatives and the Kodi home theater software all support playing MPD manifests.

info

For browser usage, this approach works well. However, standalone video players may have buffering problems, which will be discussed in the next post.